Introduction

As data and analytics models are increasingly used for both human decision making and automated processes, reliable alerting has become an essential component of the modern data stack. In this post I discuss ways to achieve it and introduce Great Expectations from the team at Superconductive, a tool for creating alerts based on data.

Alerting in traditional software services

The software engineering space is packed full of alerting platforms, and has been for a long time. Software services are the backbone of many organisations, and being aware of their health is vital for teams to take timely action in case of an incident. Most software services are exposed through APIs, and there are two main ways to identify failure. The first is through an exception tracking platform, which alerts based on exceptions raised by the code running inside the service.

The problem with the first form is that it relies on the service running. If an application has truly failed, it cannot raise exceptions, so this form is ineffective. To solve this issue, we need alerting that is decoupled from the service itself and tests its output, in this case its API availability. This is the second form, commonly called a health check, which involves polling the service’s endpoint. This form of alerting is the most effective at catching outages, and is therefore used in networking to ensure requests are only ever directed to healthy instances.
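A health check of this kind can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not a production monitor. The function name `is_healthy` and the injectable `opener` parameter are assumptions made for the example.

```python
import urllib.request
import urllib.error


def is_healthy(url, timeout=5.0, opener=urllib.request.urlopen):
    """Return True if the endpoint answers with a 2xx status within the timeout.

    `opener` is a hypothetical hook so the check can be exercised without a
    real network call; by default it is the standard library's urlopen.
    """
    try:
        with opener(url, timeout=timeout) as response:
            return 200 <= response.status < 300
    except OSError:
        # URLError, HTTPError, timeouts and connection errors are all
        # OSError subclasses, so any of them counts as "unhealthy".
        return False
```

A scheduler running outside the service would call this against the service's endpoint at a fixed interval and raise an alert after a number of consecutive failures.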

Alerting in data services

The first form of alerting used in traditional software services also applies to all data services. Exception-based alerting can and should exist across the entire data stack for the same reasons as in traditional software systems. The second form, decoupled alerting, must be applied differently.

The output of most data services is not an API endpoint (but if it is, you should definitely be using a health check as outlined above). The output of most data services is data. This could be a CSV file in an object store, a table or set of rows in a database, or in-memory data about to be passed to another service. The existence of the output alone is a good indicator that the underlying service is operating, but its shape (for example its row count, columns and value distributions) is an even better one.
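To make the difference between existence and shape concrete, here is a rough sketch for a CSV output, using only the standard library. The function name `check_csv_output` and its parameters are hypothetical, and the checks (expected columns, minimum row count) are just two simple examples of shape.

```python
import csv
from pathlib import Path


def check_csv_output(path, expected_columns, min_rows=1):
    """Return a list of problems found; an empty list means the output looks sane."""
    path = Path(path)

    # Existence check: did the service produce any output at all?
    if not path.exists():
        return [f"{path} does not exist"]

    # Shape checks: does the output have the columns and volume we expect?
    with path.open(newline="") as handle:
        reader = csv.DictReader(handle)
        columns = reader.fieldnames or []
        row_count = sum(1 for _ in reader)

    problems = []
    missing = [c for c in expected_columns if c not in columns]
    if missing:
        problems.append(f"missing columns: {missing}")
    if row_count < min_rows:
        problems.append(f"expected at least {min_rows} rows, found {row_count}")
    return problems
```

A file that exists but is empty, or has lost a column after an upstream change, passes the existence check and fails the shape checks.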

Great Expectations

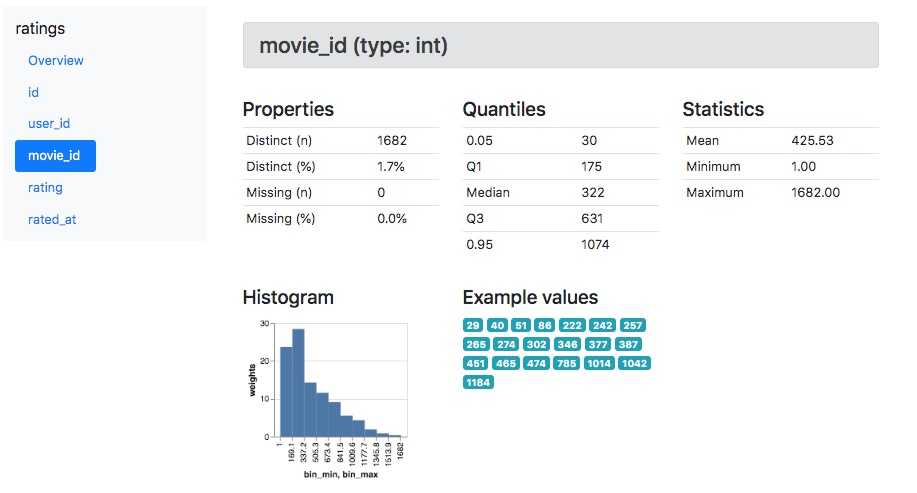

Great Expectations is an open-source project that aims to solve this problem with a data-native alerting system which can validate not just the existence of data but its shape. It achieves this through ‘expectations’. An individual expectation asserts some property of a dataset’s shape against a threshold, and an alert is raised if the threshold is exceeded.
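The idea of an expectation can be illustrated with a toy function in plain Python. This is not the Great Expectations API; the function name and the result dictionary are made up for this sketch. The `mostly` threshold is named after the library's own parameter of that name, but what it does here is only an approximation.

```python
def expect_values_not_null(values, mostly=1.0):
    """Pass when at least `mostly` (a fraction from 0 to 1) of the values are not None.

    Hypothetical helper for illustration only, not part of any library.
    """
    values = list(values)
    if not values:
        # An empty dataset cannot satisfy the expectation.
        return {"success": False, "observed_fraction": None}

    fraction = sum(v is not None for v in values) / len(values)
    return {"success": fraction >= mostly, "observed_fraction": fraction}


# Three of the four values are present, so the observed fraction is 0.75.
result = expect_values_not_null([1, None, 3, 4], mostly=0.7)
```

When `success` is false, the surrounding system is responsible for turning that result into an alert.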

There are an enormous number of ways to measure the shape of a dataset. Fortunately Great Expectations supports most of the useful ones, and you can read about them in more detail here. Great Expectations is also compatible with the most commonly used output destinations for data services, such as file stores, data warehouses and in-memory data formats.

Use Cases

As a data engineer, the most valuable use I have found for Great Expectations is latency or ‘staleness’ alerting. The tool is probably overkill for this use case alone, but I like that it is easily configurable and that I can use it to perform more complex checks at the same time. Having alerting that is decoupled from the processes that move and transform data is really valuable, and has caught otherwise silent issues on several occasions.
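The logic behind a staleness check is simple enough to sketch in plain Python. This is an illustration of the idea, not how Great Expectations implements it. The function name `is_stale` and its parameters are assumptions, and the newest timestamp would in practice come from a query such as the maximum of a load-time column.

```python
from datetime import datetime, timedelta, timezone


def is_stale(latest_record_at, max_age, now=None):
    """Return True when the newest record is older than the allowed age.

    Both datetimes are expected to be timezone-aware.
    """
    if now is None:
        now = datetime.now(timezone.utc)
    return now - latest_record_at > max_age


# Example: the newest row landed three hours before the check ran,
# but the table is expected to refresh at least every two hours.
checked_at = datetime(2021, 1, 1, 12, 0, tzinfo=timezone.utc)
newest_row = datetime(2021, 1, 1, 9, 0, tzinfo=timezone.utc)
stale = is_stale(newest_row, max_age=timedelta(hours=2), now=checked_at)
```

Because the check only looks at the data, it fires whether the pipeline crashed, silently skipped a run, or was never scheduled in the first place.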

The array of statistical assertions that Great Expectations can make is primarily aimed at monitoring the output of data models. The inner workings of data science models are often opaque, so performing automated checks on their output should be part of the process of deploying a new model. The tool’s profiling feature makes this quick to set up, and helps you work out which measures to alert on.

Lastly, the tool can be used to detect unusual activity in the data produced by other services. For example you could have a business process and want to create alerts based on the shape of the events in that process, such as unusual user activity patterns.

There are several case studies on the Great Expectations blog which are an interesting read for ideas.

Setup

Great Expectations is installed as a Python package. Configuration requires, at a minimum, a basic understanding of the command line, some Python concepts, how to connect to your data source and how to work with a Jupyter notebook. The getting started guide is really helpful and the level of entry is suitable for analysts, though they might need some assistance getting set up for local development.

Deploying does require engineering time. While Great Expectations can be invoked manually through the CLI, this defeats the point of automated alerting. In production, an orchestrator and scheduler are necessary to execute the tasks. Most teams use Airflow, where a BashOperator, PythonOperator, KubernetesPodOperator or similar would be suitable. A concept called ‘Checkpoints’ allows expectations to be logically grouped and executed together, making it easy to run different schedules or alerting for distinct monitoring tiers.
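As a rough sketch of what a scheduled task might call, the function below shells out to the CLI and fails loudly when validation does not pass. It rests on two assumptions: that the installed version of Great Expectations exposes a `great_expectations checkpoint run <name>` command, and that the command exits with a non-zero status when validation fails. The function name `run_checkpoint`, the `runner` parameter and the checkpoint name in the usage note are hypothetical.

```python
import subprocess


def run_checkpoint(name, runner=subprocess.run):
    """Run one checkpoint through the CLI and raise if it does not succeed.

    `runner` is a hypothetical hook that defaults to subprocess.run, so the
    function can be tested without Great Expectations installed.
    """
    # Assumed CLI form; check it against the version you have installed.
    command = ["great_expectations", "checkpoint", "run", name]
    result = runner(command)
    if result.returncode != 0:
        raise RuntimeError(
            f"Checkpoint {name!r} failed with exit code {result.returncode}"
        )
    return result
```

A function like this could be handed to a PythonOperator, for example with a hypothetical checkpoint named `tier_1_hourly`, so that a failed validation also marks the orchestrator task as failed.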

Alerts can currently be directed at either Slack or PagerDuty. PagerDuty alerts are a contribution I made as we increasingly relied upon Great Expectations for alerting on our tier 1 data applications at Tails.com. The Superconductive team wrote a blog post on the contribution which you can read here, and you can read how to configure alerting in the docs.

Alternatives

The Great Expectations docs are very transparent about what the tool cannot do and is not intended to achieve. Most crucially, it relies on a Python environment. If you use R or TensorFlow, the docs mention two alternatives which are worth looking into.

In Summary

Alerting is one of the many areas in which data is beginning to catch up to traditional software services. Great Expectations should definitely be a consideration for any modern data stack as a means to create decoupled, data-native alerting. A deal-breaker for some may be that at this stage Great Expectations is provided as host-your-own only. If you are using a fully managed data management platform, you likely won’t have a scheduler to orchestrate tasks with. Finally, I’d recommend joining the community on Slack for any questions specific to your stack, where there are over 1,500 members at the time of writing.